The synthesis of scientific shreds of evidence: a critical appraisal on systematic review and meta-analysis methodology

Introduction

Evidence synthesis has a long history. Although the methods of evidence-based disciplines are transferable, it should also be acknowledged that different fields of application pose distinct methodological challenges (1). Synthesising results across studies, to identify the causes of variation in outcomes and to reach an overall understanding of a problem, is a crucial part of the scientific method. Until recently, scientific findings were summarised in narrative reviews, an approach in which a transparent and objective summary of results has become increasingly difficult. Systematic reviews and meta-analyses, conducted according to strict protocols to guarantee reproducibility and reduce bias, have become more common in evidence synthesis. Systematic reviews of randomised controlled trials (RCTs) are usually judged to provide the highest level of evidence for the comparative efficacy of interventions. Systematic reviews can be combined with meta-analysis to examine the reasons for differences among effect sizes (study outcomes) and to estimate the magnitude of the effect across the primary studies. Meta-analysis is a statistical method for quantitatively synthesising similar studies identified by a systematic review (2).

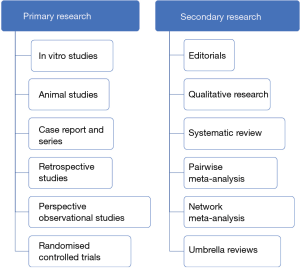

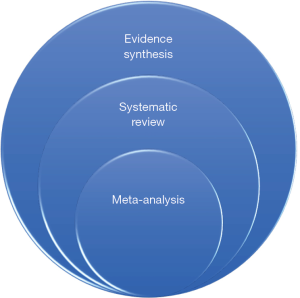

Narrative reviews, by contrast, remain useful for tracing the development of particular lines of research and for advancing conceptual frameworks (3). A pyramid of clinical evidence is widely accepted; from bottom to top, it comprises basic science experiments, case series, cross-sectional studies, case-control studies, cohort studies, and RCTs. This pyramid of primary research is mirrored by a grading of evidence synthesis (secondary research): qualitative reviews, systematic reviews, and meta-analyses (Figure 1). A further level (tertiary research) comprises umbrella reviews, overviews of reviews, and meta-epidemiologic studies (4). Systematic reviews and meta-analyses of RCTs represent the highest levels of evidence. However, systematic reviews and meta-analyses are only two of the methods within the broader field of evidence synthesis (Figure 2) (5).

Systematic review

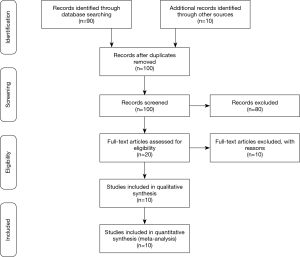

The systematic review process relies on strict methodological guidelines for the literature search, study screening (including critical appraisal of the eligible studies that match pre-defined criteria), data extraction, and coding. Software, protocols, and reporting guidelines for systematic reviews and meta-analyses are well established. The Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) statement is an evidence-based minimum set of items for reporting systematic reviews and meta-analyses (http://www.prisma-statement.org/). PRISMA includes a 27-item checklist and a template flow chart for presenting a systematic review (the PRISMA flow diagram) (Figure 3) (6). Semantic properties are crucial during systematic reviews because academic search engines ignore the semantic connections between concepts. Synonyms can therefore decrease search sensitivity, because articles that describe an essential concept with a different term are missed. Homonyms cannot be excluded directly from keyword-based searches, but the problem of synonyms can be avoided by identifying them and including them as additional search terms.
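The bookkeeping behind a PRISMA flow diagram can be sketched in a few lines of Python. This is a minimal illustration rather than part of PRISMA itself: the class name `PrismaFlow`, its field names, and the record counts in the example are hypothetical, and the stages are assumed to follow the 2009 flow diagram (identification, screening, eligibility, inclusion). Deriving each box from the previous one makes inconsistent counts easy to catch, and storing full-text exclusions by reason mirrors the diagram's requirement to report why articles were excluded.

```python
from dataclasses import dataclass, field


@dataclass
class PrismaFlow:
    """Hypothetical record counts for the stages of a PRISMA (2009) flow diagram."""

    identified_databases: int  # records identified through database searching
    identified_other: int      # additional records identified through other sources
    duplicates_removed: int    # duplicates removed before screening
    excluded_screening: int    # records excluded at title/abstract screening
    # Full-text articles excluded, keyed by the reason reported in the diagram.
    excluded_full_text: dict[str, int] = field(default_factory=dict)
    included_quantitative: int = 0  # studies entering the meta-analysis

    @property
    def screened(self) -> int:
        return self.identified_databases + self.identified_other - self.duplicates_removed

    @property
    def full_text_assessed(self) -> int:
        return self.screened - self.excluded_screening

    @property
    def included_qualitative(self) -> int:
        return self.full_text_assessed - sum(self.excluded_full_text.values())

    def check(self) -> None:
        """Raise ValueError if the counts cannot describe a valid flow."""
        for name in ("screened", "full_text_assessed", "included_qualitative"):
            if getattr(self, name) < 0:
                raise ValueError(f"negative count at stage '{name}'")
        if self.included_quantitative > self.included_qualitative:
            raise ValueError("meta-analysis includes more studies than the synthesis")


# Illustrative (made-up) numbers.
flow = PrismaFlow(
    identified_databases=412,
    identified_other=8,
    duplicates_removed=95,
    excluded_screening=280,
    excluded_full_text={"wrong population": 20, "no comparator": 9, "duplicate cohort": 4},
    included_quantitative=8,
)
flow.check()
print(flow.screened, flow.full_text_assessed, flow.included_qualitative)  # 325 45 12
```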

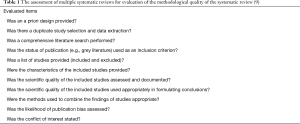

Further, the small-world property of semantic networks acts like homonymy, increasing the number of unrelated hits returned by search engines. Consequently, some subjects will not be amenable to an efficient systematic review. For these, manual sorting may be the only way to guarantee the systematic inclusion of relevant information, albeit at the risk of fatigue-induced bias. Fortunately, some existing forms of data can substitute for semantic information at the article-identification stage of the systematic review process. First, expert knowledge provides an excellent source of information on the relatedness of different concepts. Second, examining the reference lists of pertinent articles is a valuable technique for identifying articles that the search keywords missed. Lastly, newer search-engine algorithms seek to capture the intended meaning of a search, and the use of semantic information is likely to increase in future (7).

PICO is used to build a meaningful and clear question when searching for quantitative evidence. A good clinical question should be formatted according to the PICO model, with four essential elements: (I) the patient or problem in question; (II) the intervention of interest; (III) the comparison; (IV) the outcomes of interest. Searching for evidence is a three-step process: (I) an exploratory search; (II) implementation of a checked search strategy in each selected database; (III) review of the references of the retrieved studies (8). Before beginning a systematic review, it is essential to register the systematic review or meta-analysis in the International Prospective Register of Systematic Reviews (PROSPERO) (https://www.crd.york.ac.uk/PROSPERO/), to ensure that the planned review has not already been performed and is not currently being updated. The methodological quality of systematic reviews can be assessed with AMSTAR, a measurement tool developed for this purpose (Table 1) (9).
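A PICO question can also drive the search string directly: synonyms for each element are combined with OR, and the elements are combined with AND, which is one way of handling the synonym problem described earlier. The sketch below is a hypothetical helper, not a feature of any database. The class `PicoQuestion`, the example terms (taken from the topic of this series), and the choice to leave the outcome out of the query by default (a common choice, since outcomes are often not described in titles and abstracts) are all assumptions; real databases such as PubMed add their own field tags and controlled vocabulary on top of this generic Boolean syntax.

```python
from dataclasses import dataclass


@dataclass
class PicoQuestion:
    """Hypothetical PICO container: the preferred term comes first, then synonyms."""

    population: list[str]
    intervention: list[str]
    comparison: list[str]
    outcome: list[str]

    def as_question(self) -> str:
        """Render the clinical question from the preferred term of each element."""
        return (
            f"In {self.population[0]}, does {self.intervention[0]} compared with "
            f"{self.comparison[0]} affect {self.outcome[0]}?"
        )

    def boolean_query(self, include_outcome: bool = False) -> str:
        """OR the synonyms within each element, then AND the elements together."""
        groups = [self.population, self.intervention, self.comparison]
        if include_outcome:
            groups.append(self.outcome)
        return " AND ".join(or_block(terms) for terms in groups)


def or_block(terms: list[str]) -> str:
    # Quote multi-word phrases so they are searched as a unit.
    quoted = [f'"{t}"' if " " in t else t for t in terms]
    return "(" + " OR ".join(quoted) + ")"


question = PicoQuestion(
    population=["early-stage lung cancer", "stage I NSCLC"],
    intervention=["sublobar resection", "segmentectomy", "wedge resection"],
    comparison=["lobectomy"],
    outcome=["overall survival"],
)
print(question.as_question())
print(question.boolean_query())
```

Keeping synonyms as explicit lists makes the search strategy easy to report and rerun, which supports the reproducibility that the registration and reporting steps aim for.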


Meta-analysis

Meta-analysis may assess the evidence for the efficacy of specific interventions for a problem, or for hypothesised underlying associations for a condition, in a small number of studies (<25), or it may seek broad generalisations across a larger number of studies; these differences in goals affect every step of research synthesis, from study inclusion criteria to statistical methods. Meta-analysis is used to combine evidence across studies (detection of effects), to assess the magnitude and variation of effects, and to analyse the factors that influence them (moderators or covariates). When the objective is to evaluate evidence for particular interventions, meta-analysis focuses principally on precisely estimating an overall mean effect and may involve identifying factors that modify it. Such a meta-analysis must therefore define the population in question accurately and clearly, and its results can only be applied to that population (3). Results should be interpreted and conclusions drawn with care: conclusions should rest on the outcomes actually assessed, and the clinical utility of an intervention can be better judged when both effectiveness and safety outcomes are considered (2). The inclusion of crossover trials in meta-analyses has not yet been addressed with empirical data based on paired analysis (10). Odds ratios, relative risks, and risk differences can all be derived from a binomial model. Odds ratios are frequently used because of their statistical stability, but caution is needed against their indiscriminate use as estimators of risk.
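To show how these effect measures arise from the same 2×2 table of events and non-events, the Python sketch below computes the odds ratio, relative risk, and risk difference for a single trial, together with the usual large-sample standard errors (on the log scale for the two ratios). The function name `binary_effects` and the trial counts are hypothetical, and the function simply rejects tables with a zero cell instead of applying a continuity correction, which is a simplifying assumption.

```python
import math
from typing import NamedTuple


class EffectSizes(NamedTuple):
    odds_ratio: float
    log_or_se: float        # standard error of log(OR)
    risk_ratio: float
    log_rr_se: float        # standard error of log(RR)
    risk_difference: float
    rd_se: float            # standard error of RD


def binary_effects(events_t: int, n_t: int, events_c: int, n_c: int) -> EffectSizes:
    """Effect sizes for one two-arm trial; assumes no zero cells."""
    a, b = events_t, n_t - events_t  # treatment arm: events, non-events
    c, d = events_c, n_c - events_c  # control arm: events, non-events
    if min(a, b, c, d) == 0:
        raise ValueError("zero cell: apply a continuity correction first")
    odds_ratio = (a * d) / (b * c)
    log_or_se = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    p_t, p_c = a / n_t, c / n_c
    risk_ratio = p_t / p_c
    log_rr_se = math.sqrt(1 / a - 1 / n_t + 1 / c - 1 / n_c)
    risk_difference = p_t - p_c
    rd_se = math.sqrt(p_t * (1 - p_t) / n_t + p_c * (1 - p_c) / n_c)
    return EffectSizes(odds_ratio, log_or_se, risk_ratio, log_rr_se, risk_difference, rd_se)


rare = binary_effects(10, 1000, 20, 1000)      # event rates 1% vs 2%
common = binary_effects(300, 1000, 450, 1000)  # event rates 30% vs 45%
print(f"{rare.odds_ratio:.3f} {rare.risk_ratio:.3f}")      # 0.495 0.500
print(f"{common.odds_ratio:.3f} {common.risk_ratio:.3f}")  # 0.524 0.667
```

With 1% and 2% event rates the two ratios nearly coincide, whereas with 30% and 45% they diverge, so reading an odds ratio as a risk ratio is misleading when events are common.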

When events are rare, odds ratios and relative risks are comparable. Both, however, ignore the duration of follow-up, so hazard ratios should be preferred (being more reliable) when follow-up is not uniform (4). R can also be used for meta-analyses and offers valuable tools for sensitivity analyses (11).
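A leave-one-out analysis is one common form of sensitivity analysis: the pooled estimate is recomputed with each study omitted in turn, to see whether any single study drives the result. In R, the metafor package offers this through its `leave1out()` function; the Python sketch below instead shows the underlying arithmetic under explicit assumptions: a fixed-effect (common-effect) inverse-variance model on the log odds ratio scale, with Cochran's Q and I² reported as heterogeneity measures. A random-effects model, such as DerSimonian-Laird, would add a between-study variance term and is not shown. The function names and the study data are hypothetical.

```python
import math


def pool_fixed(effects: list[float], ses: list[float]) -> tuple[float, float, float, float]:
    """Inverse-variance fixed-effect pooling on an additive scale (e.g. log OR).

    Returns the pooled effect, its standard error, Cochran's Q and I^2 (%).
    """
    weights = [1 / s**2 for s in ses]
    total = sum(weights)
    pooled = sum(w * y for w, y in zip(weights, effects)) / total
    se_pooled = math.sqrt(1 / total)
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return pooled, se_pooled, q, i2


def leave_one_out(effects: list[float], ses: list[float]) -> list[tuple[int, float, float]]:
    """Re-pool with each study omitted in turn: (omitted index, pooled, SE)."""
    results = []
    for k in range(len(effects)):
        est, se_k, _, _ = pool_fixed(effects[:k] + effects[k + 1:], ses[:k] + ses[k + 1:])
        results.append((k, est, se_k))
    return results


# Hypothetical log odds ratios and standard errors for four trials.
log_or = [math.log(0.80), math.log(0.70), math.log(1.10), math.log(0.60)]
log_or_se = [0.20, 0.25, 0.30, 0.15]

pooled, se_pooled, q, i2 = pool_fixed(log_or, log_or_se)
low, high = pooled - 1.96 * se_pooled, pooled + 1.96 * se_pooled
print(f"OR {math.exp(pooled):.2f} (95% CI {math.exp(low):.2f}-{math.exp(high):.2f}), "
      f"Q = {q:.2f}, I2 = {i2:.0f}%")
for k, est, _ in leave_one_out(log_or, log_or_se):
    print(f"without study {k + 1}: OR {math.exp(est):.2f}")
```

Pooling is done on the log scale because log odds ratios are approximately normally distributed, and results are exponentiated back to odds ratios only for display.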

Limitations

The quality of a synthesis depends on the quality of the included studies; therefore, the methodological quality of candidate studies must be evaluated before inclusion. The evaluation, and the reasons for any exclusion, must be reported in the manuscript. Because of space limits on tables and figures in manuscripts, appropriate visual displays (funnel plots) and statistical analyses of publication bias (Egger's regression) are often lacking (12). A key question guiding critical appraisal is whether the selected research methods have been applied properly, yielding accurate results. Critical appraisal is a prerequisite for the transferability of the results (13). Systematic reviews and meta-analyses are statistical and scientific techniques, not magic. They can highlight areas where evidence is lacking, but they cannot overcome these weaknesses. Other challenges for systematic reviews and meta-analyses include research and publication bias and the over- or under-representation of populations, which bias the overall picture. The use of statistically flawed approaches can lead to erroneous and misleading results. Regrettably, the term meta-analysis is often misused regardless of the rigour of the methodology. The term should be applied only to studies that use well-established statistical procedures, such as weighting and heterogeneity analysis, appropriate effect-size calculation, and statistical models that account for the hierarchical structure of meta-analysis data, or to studies that develop rigorously justified methodological advances (3). Potentially relevant studies may be missing from a meta-analysis. Despite methodologists' best efforts to locate all eligible evidence, even the most comprehensive searches miss the so-called grey literature (dissertations, conference abstracts, book chapters, policy documents). However, the impact of grey literature on the conclusions of meta-analyses has not been fully explained (14).
The best meta-analysis should always aim to present meaningful and clinically relevant analyses of the available data (15).
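Egger's regression test, named above as a statistical check for publication bias, regresses each study's standardised effect (effect divided by its standard error) on its precision (the reciprocal of the standard error); an intercept far from zero suggests funnel-plot asymmetry. The Python sketch below is a minimal ordinary-least-squares implementation using only the standard library. The function name `egger_test` and the example data are hypothetical, and the sketch returns the t statistic (with k - 2 degrees of freedom for k studies) rather than a p-value, which could be obtained from a t distribution, for example with `scipy.stats.t`. Such tests have low power with few studies and are commonly recommended only when at least ten studies are available.

```python
import math
from typing import NamedTuple


class EggerResult(NamedTuple):
    intercept: float     # asymmetry estimate; about 0 for a symmetric funnel plot
    slope: float         # estimate of the underlying effect
    se_intercept: float
    t_stat: float        # intercept / se_intercept, with df = k - 2
    df: int


def egger_test(effects: list[float], ses: list[float]) -> EggerResult:
    """OLS regression of effect/SE (standard normal deviate) on 1/SE (precision)."""
    k = len(effects)
    if k < 3:
        raise ValueError("Egger's test needs at least three studies")
    x = [1 / s for s in ses]                   # precision
    z = [y / s for y, s in zip(effects, ses)]  # standardised effect
    x_bar, z_bar = sum(x) / k, sum(z) / k
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("all studies have the same precision")
    sxz = sum((xi - x_bar) * (zi - z_bar) for xi, zi in zip(x, z))
    slope = sxz / sxx
    intercept = z_bar - slope * x_bar
    rss = sum((zi - intercept - slope * xi) ** 2 for xi, zi in zip(x, z))
    sigma2 = rss / (k - 2)                     # residual variance
    se_intercept = math.sqrt(sigma2 * (1 / k + x_bar**2 / sxx))
    t_stat = intercept / se_intercept if se_intercept > 0 else math.nan
    return EggerResult(intercept, slope, se_intercept, t_stat, k - 2)


# Hypothetical log odds ratios: the small (high-SE) studies show larger effects.
log_or = [-0.90, -0.70, -0.45, -0.30, -0.25, -0.20]
log_or_se = [0.50, 0.40, 0.30, 0.20, 0.15, 0.10]
result = egger_test(log_or, log_or_se)
print(f"intercept {result.intercept:.2f}, t = {result.t_stat:.2f} on {result.df} df")
```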

Conclusions

Developing robust, flexible methods to synthesise scientific evidence is an ongoing challenge if the effectiveness of scientific investigation is to be maximised (3). Grading and assessing evidence are equally crucial so that stakeholders can make well-informed decisions (5). The highest level of evidence can be derived from systematic reviews and meta-analyses. However, poorly designed or inadequately conducted reviews can give incorrect results and fail to inform clinical practice.

Acknowledgments

Funding: This work was partially supported by the Italian Ministry of Health with Ricerca Corrente and 5×1,000 funds.

Footnote

Provenance and Peer Review: This article was commissioned by the Guest Editors (Mario Nosotti, Ilaria Righi and Lorenzo Rosso) for the series “Early Stage Lung Cancer: Sublobar Resections are a Choice?” published in Journal of Thoracic Disease. The article was sent for external peer review organized by the Guest Editors and the editorial office.

Conflicts of Interest: Both authors have completed the ICMJE uniform disclosure form (available at http://dx.doi.org/10.21037/jtd.2020.03.07). The series “Early Stage Lung Cancer: Sublobar Resections are a Choice?” was commissioned by the editorial office without any funding or sponsorship. LB serves as an unpaid editorial board member of Journal of Thoracic Disease from Jan 2016 to Dec 2021. LS has no other conflicts of interest to declare.

Ethical Statement: The authors are accountable for all aspects of the work in ensuring that questions related to the accuracy or integrity of any part of the work are appropriately investigated and resolved.

Open Access Statement: This is an Open Access article distributed in accordance with the Creative Commons Attribution-NonCommercial-NoDerivs 4.0 International License (CC BY-NC-ND 4.0), which permits the non-commercial replication and distribution of the article with the strict proviso that no changes or edits are made and the original work is properly cited (including links to both the formal publication through the relevant DOI and the license). See: https://creativecommons.org/licenses/by-nc-nd/4.0/.

References

- Stewart GB, Schmid CH. Lessons from meta-analysis in ecology and evolution: the need for trans-disciplinary evidence synthesis methodologies. Res Synth Methods 2015;6:109-10.

- Rouse B, Chaimani A, Li T. Network meta-analysis: an introduction for clinicians. Intern Emerg Med 2017;12:103-11.

- Gurevitch J, Koricheva J, Nakagawa S, et al. Meta-analysis and the science of research synthesis. Nature 2018;555:175-82.

- Biondi-Zoccai G, Abbate A, Benedetto U, et al. Network meta-analysis for evidence synthesis: what is it and why is it posed to dominate cardiovascular decision making? Int J Cardiol 2015;182:309-14.

- Garas G, Ibrahim A, Ashrafian H, et al. Evidence-based surgery: barriers, solutions, and the role of evidence synthesis. World J Surg 2012;36:1723-31.

- Moher D, Liberati A, Tetzlaff J, et al. Preferred reporting items for systematic reviews and meta-analyses: the PRISMA statement. PLoS Med 2009;6:e1000097.

- Westgate MJ, Lindenmayer DB. The difficulties of systematic reviews. Conserv Biol 2017;31:1002-7.

- Oh EG. Synthesizing Quantitative Evidence for Evidence-based Nursing: Systematic Review. Asian Nurs Res (Korean Soc Nurs Sci) 2016;10:89-93.

- Shea BJ, Grimshaw JM, Wells GA, et al. Development of AMSTAR: a measurement tool to assess the methodological quality of systematic reviews. BMC Med Res Methodol 2007;7:10.

- Li T, Yu T, Hawkins BS, et al. Design, Analysis, and Reporting of Crossover Trials for Inclusion in a Meta-Analysis. PLoS One 2015;10:e0133023.

- R Core Team. R: A Language and Environment for Statistical Computing. Vienna, Austria: R Foundation for Statistical Computing, 2018.

- Mak A, Cheung MW, Fu EH, et al. Meta-analysis in medicine: an introduction. Int J Rheum Dis 2010;13:101-4.

- Korhonen A, Hakulinen-Viitanen T, Jylha V, et al. Meta-synthesis and evidence-based health care--a method for systematic review. Scand J Caring Sci 2013;27:1027-34.

- Schmucker C, Bluemle A, Briel M, et al. A protocol for a systematic review on the impact of unpublished studies and studies published in the gray literature in meta-analyses. Syst Rev 2013;2:24.

- Barza M, Trikalinos TA, Lau J. Statistical considerations in meta-analysis. Infect Dis Clin North Am 2009;23:195-210.